3 This document describes the high-level architecture of rust-analyzer.

4 If you want to familiarize yourself with the code base, you are just in the right place!

6 See also the [guide](./guide.md), which walks through a particular snapshot of rust-analyzer code base.

8 Yet another resource is this playlist with videos about various parts of the analyzer:

10 https://www.youtube.com/playlist?list=PL85XCvVPmGQho7MZkdW-wtPtuJcFpzycE

12 Note that the guide and videos are pretty dated, this document should be, in general, fresher.

14 See also these implementation-related blog posts:

16 * https://rust-analyzer.github.io/blog/2019/11/13/find-usages.html

17 * https://rust-analyzer.github.io/blog/2020/07/20/three-architectures-for-responsive-ide.html

18 * https://rust-analyzer.github.io/blog/2020/09/16/challeging-LR-parsing.html

19 * https://rust-analyzer.github.io/blog/2020/09/28/how-to-make-a-light-bulb.html

20 * https://rust-analyzer.github.io/blog/2020/10/24/introducing-ungrammar.html

24

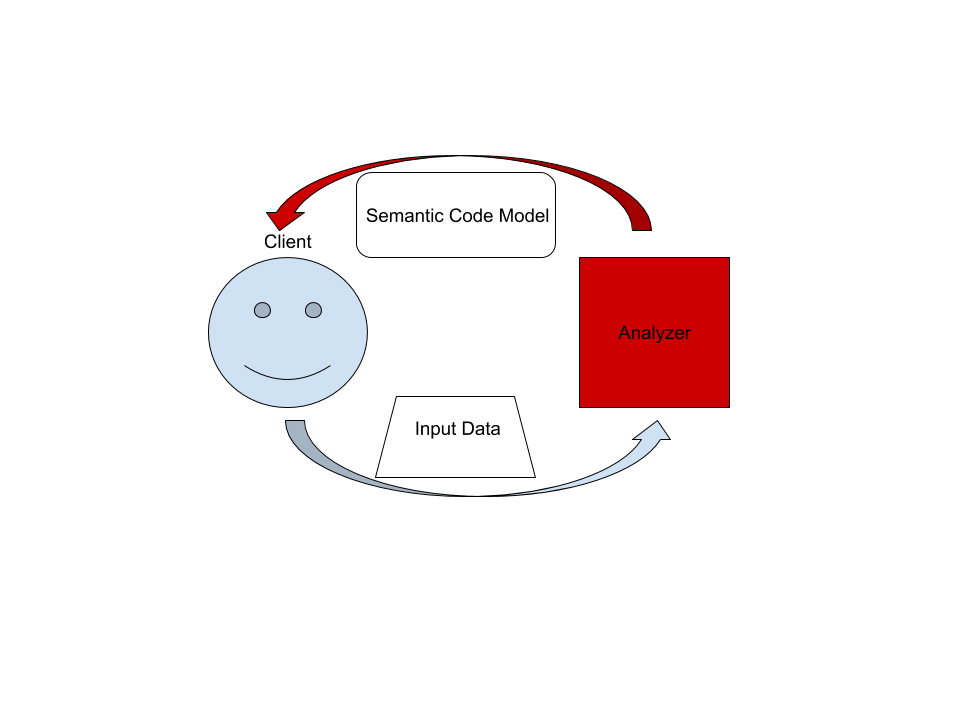

26 On the highest level, rust-analyzer is a thing which accepts input source code from the client and produces a structured semantic model of the code.

28 More specifically, input data consists of a set of test files (`(PathBuf, String)` pairs) and information about project structure, captured in the so called `CrateGraph`.

29 The crate graph specifies which files are crate roots, which cfg flags are specified for each crate and what dependencies exist between the crates.

30 This is the input (ground) state.

31 The analyzer keeps all this input data in memory and never does any IO.

32 Because the input data is source code, which typically measures in tens of megabytes at most, keeping everything in memory is OK.

34 A "structured semantic model" is basically an object-oriented representation of modules, functions and types which appear in the source code.

35 This representation is fully "resolved": all expressions have types, all references are bound to declarations, etc.

36 This is derived state.

38 The client can submit a small delta of input data (typically, a change to a single file) and get a fresh code model which accounts for changes.

40 The underlying engine makes sure that model is computed lazily (on-demand) and can be quickly updated for small modifications.

44 `crates/rust-analyzer/src/bin/main.rs` contains the main function which spawns LSP.

45 This is *the* entry point, but it front-loads a lot of complexity, so its fine to just skim through it.

47 `crates/rust-analyzer/src/handlers.rs` implements all LSP requests and is a great place to start if you are already familiar with LSP.

49 `Analysis` and `AnalysisHost` types define the main API.

53 This section talks briefly about various important directories and data structures.

54 Pay attention to the **Architecture Invariant** sections.

55 They often talk about things which are deliberately absent in the source code.

57 Note also which crates are **API Boundaries**.

58 Remember, [rules at the boundary are different](https://www.tedinski.com/2018/02/06/system-boundaries.html).

62 This is rust-analyzer's "build system".

63 We use cargo to compile rust code, but there are also various other tasks, like release management or local installation.

64 They are handled by Rust code in the xtask directory.

72 rust-analyzer independent libraries which we publish to crates.io.

73 It's not heavily utilized at the moment.

77 It is a hand-written recursive descent parser, which produces a sequence of events like "start node X", "finish node Y".

79 [kotlin's parser](https://github.com/JetBrains/kotlin/blob/4d951de616b20feca92f3e9cc9679b2de9e65195/compiler/frontend/src/org/jetbrains/kotlin/parsing/KotlinParsing.java),

80 which is a good source of inspiration for dealing with syntax errors and incomplete input.

81 Original [libsyntax parser](https://github.com/rust-lang/rust/blob/6b99adeb11313197f409b4f7c4083c2ceca8a4fe/src/libsyntax/parse/parser.rs) is what we use for the definition of the Rust language.

82 `TreeSink` and `TokenSource` traits bridge the tree-agnostic parser from `grammar` with `rowan` trees.

84 **Architecture Invariant:** the parser is independent of the particular tree structure and particular representation of the tokens.

85 It transforms one flat stream of events into another flat stream of events.

86 Token independence allows us to parse out both text-based source code and `tt`-based macro input.

87 Tree independence allows us to more easily vary the syntax tree implementation.

88 It should also unlock efficient light-parsing approaches.

89 For example, you can extract the set of names defined in a file (for typo correction) without building a syntax tree.

91 **Architecture Invariant:** parsing never fails, the parser produces `(T, Vec<Error>)` rather than `Result<T, Error>`.

95 Rust syntax tree structure and parser.

96 See [RFC](https://github.com/rust-lang/rfcs/pull/2256) and [./syntax.md](./syntax.md) for some design notes.

98 - [rowan](https://github.com/rust-analyzer/rowan) library is used for constructing syntax trees.

99 - `ast` provides a type safe API on top of the raw `rowan` tree.

100 - `ungrammar` description of the grammar, which is used to generate `syntax_kinds` and `ast` modules, using `cargo xtask codegen` command.

102 Tests for ra_syntax are mostly data-driven.

103 `test_data/parser` contains subdirectories with a bunch of `.rs` (test vectors) and `.txt` files with corresponding syntax trees.

104 During testing, we check `.rs` against `.txt`.

105 If the `.txt` file is missing, it is created (this is how you update tests).

106 Additionally, running `cargo xtask codegen` will walk the grammar module and collect all `// test test_name` comments into files inside `test_data/parser/inline` directory.

108 To update test data, run with `UPDATE_EXPECT` variable:

111 env UPDATE_EXPECT=1 cargo qt

114 After adding a new inline test you need to run `cargo xtest codegen` and also update the test data as described above.

116 Note [`api_walkthrough`](https://github.com/rust-analyzer/rust-analyzer/blob/2fb6af89eb794f775de60b82afe56b6f986c2a40/crates/ra_syntax/src/lib.rs#L190-L348)

117 in particular: it shows off various methods of working with syntax tree.

119 See [#93](https://github.com/rust-analyzer/rust-analyzer/pull/93) for an example PR which fixes a bug in the grammar.

121 **Architecture Invariant:** `syntax` crate is completely independent from the rest of rust-analyzer. It knows nothing about salsa or LSP.

122 This is important because it is possible to make useful tooling using only the syntax tree.

123 Without semantic information, you don't need to be able to _build_ code, which makes the tooling more robust.

124 See also https://web.stanford.edu/~mlfbrown/paper.pdf.

125 You can view the `syntax` crate as an entry point to rust-analyzer.

126 `syntax` crate is an **API Boundary**.

128 **Architecture Invariant:** syntax tree is a value type.

129 The tree is fully determined by the contents of its syntax nodes, it doesn't need global context (like an interner) and doesn't store semantic info.

130 Using the tree as a store for semantic info is convenient in traditional compilers, but doesn't work nicely in the IDE.

131 Specifically, assists and refactors require transforming syntax trees, and that becomes awkward if you need to do something with the semantic info.

133 **Architecture Invariant:** syntax tree is built for a single file.

134 This is to enable parallel parsing of all files.

136 **Architecture Invariant:** Syntax trees are by design incomplete and do not enforce well-formedness.

137 If an AST method returns an `Option`, it *can* be `None` at runtime, even if this is forbidden by the grammar.

141 We use the [salsa](https://github.com/salsa-rs/salsa) crate for incremental and on-demand computation.

142 Roughly, you can think of salsa as a key-value store, but it can also compute derived values using specified functions. The `base_db` crate provides basic infrastructure for interacting with salsa.

143 Crucially, it defines most of the "input" queries: facts supplied by the client of the analyzer.

144 Reading the docs of the `base_db::input` module should be useful: everything else is strictly derived from those inputs.

146 **Architecture Invariant:** particularities of the build system are *not* the part of the ground state.

147 In particular, `base_db` knows nothing about cargo.

148 The `CrateGraph` structure is used to represent the dependencies between the crates abstractly.

150 **Architecture Invariant:** `base_db` doesn't know about file system and file paths.

151 Files are represented with opaque `FileId`, there's no operation to get an `std::path::Path` out of the `FileId`.

153 ### `crates/hir_expand`, `crates/hir_def`, `crates/hir_ty`

155 These crates are the *brain* of rust-analyzer.

156 This is the compiler part of the IDE.

158 `hir_xxx` crates have a strong ECS flavor, in that they work with raw ids and directly query the database.

159 There's little abstraction here.

160 These crates integrate deeply with salsa and chalk.

162 Name resolution, macro expansion and type inference all happen here.

163 These crates also define various intermediate representations of the core.

165 `ItemTree` condenses a single `SyntaxTree` into a "summary" data structure, which is stable over modifications to function bodies.

167 `DefMap` contains the module tree of a crate and stores module scopes.

169 `Body` stores information about expressions.

171 **Architecture Invariant:** these crates are not, and will never be, an api boundary.

173 **Architecture Invariant:** these crates explicitly care about being incremental.

174 The core invariant we maintain is "typing inside a function's body never invalidates global derived data".

175 i.e., if you change the body of `foo`, all facts about `bar` should remain intact.

177 **Architecture Invariant:** hir exists only in context of particular crate instance with specific CFG flags.

178 The same syntax may produce several instances of HIR if the crate participates in the crate graph more than once.

182 The top-level `hir` crate is an **API Boundary**.

183 If you think about "using rust-analyzer as a library", `hir` crate is most likely the façade you'll be talking to.

185 It wraps ECS-style internal API into a more OO-flavored API (with an extra `db` argument for each call).

187 **Architecture Invariant:** `hir` provides a static, fully resolved view of the code.

188 While internal `hir_*` crates _compute_ things, `hir`, from the outside, looks like an inert data structure.

190 `hir` also handles the delicate task of going from syntax to the corresponding `hir`.

191 Remember that the mapping here is one-to-many.

192 See `Semantics` type and `source_to_def` module.

194 Note in particular a curious recursive structure in `source_to_def`.

195 We first resolve the parent _syntax_ node to the parent _hir_ element.

196 Then we ask the _hir_ parent what _syntax_ children does it have.

197 Then we look for our node in the set of children.

199 This is the heart of many IDE features, like goto definition, which start with figuring out the hir node at the cursor.

200 This is some kind of (yet unnamed) uber-IDE pattern, as it is present in Roslyn and Kotlin as well.

204 The `ide` crate builds on top of `hir` semantic model to provide high-level IDE features like completion or goto definition.

205 It is an **API Boundary**.

206 If you want to use IDE parts of rust-analyzer via LSP, custom flatbuffers-based protocol or just as a library in your text editor, this is the right API.

208 **Architecture Invariant:** `ide` crate's API is build out of POD types with public fields.

209 The API uses editor's terminology, it talks about offsets and string labels rather than in terms of definitions or types.

210 It is effectively the view in MVC and viewmodel in [MVVM](https://en.wikipedia.org/wiki/Model%E2%80%93view%E2%80%93viewmodel).

211 All arguments and return types are conceptually serializable.

212 In particular, syntax tress and and hir types are generally absent from the API (but are used heavily in the implementation).

213 Shout outs to LSP developers for popularizing the idea that "UI" is a good place to draw a boundary at.

215 `ide` is also the first crate which has the notion of change over time.

216 `AnalysisHost` is a state to which you can transactionally `apply_change`.

217 `Analysis` is an immutable snapshot of the state.

219 Internally, `ide` is split across several crates. `ide_assists`, `ide_completion` and `ide_ssr` implement large isolated features.

220 `ide_db` implements common IDE functionality (notably, reference search is implemented here).

221 The `ide` contains a public API/façade, as well as implementation for a plethora of smaller features.

223 **Architecture Invariant:** `ide` crate strives to provide a _perfect_ API.

224 Although at the moment it has only one consumer, the LSP server, LSP *does not* influence it's API design.

225 Instead, we keep in mind a hypothetical _ideal_ client -- an IDE tailored specifically for rust, every nook and cranny of which is packed with Rust-specific goodies.

227 ### `crates/rust-analyzer`

229 This crate defines the `rust-analyzer` binary, so it is the **entry point**.

230 It implements the language server.

232 **Architecture Invariant:** `rust-analyzer` is the only crate that knows about LSP and JSON serialization.

233 If you want to expose a datastructure `X` from ide to LSP, don't make it serializable.

234 Instead, create a serializable counterpart in `rust-analyzer` crate and manually convert between the two.

236 `GlobalState` is the state of the server.

237 The `main_loop` defines the server event loop which accepts requests and sends responses.

238 Requests that modify the state or might block user's typing are handled on the main thread.

239 All other requests are processed in background.

241 **Architecture Invariant:** the server is stateless, a-la HTTP.

242 Sometimes state needs to be preserved between requests.

243 For example, "what is the `edit` for the fifth completion item of the last completion edit?".

244 For this, the second request should include enough info to re-create the context from scratch.

245 This generally means including all the parameters of the original request.

247 `reload` module contains the code that handles configuration and Cargo.toml changes.

248 This is a tricky business.

250 **Architecture Invariant:** `rust-analyzer` should be partially available even when the build is broken.

251 Reloading process should not prevent IDE features from working.

253 ### `crates/toolchain`, `crates/project_model`, `crates/flycheck`

255 These crates deal with invoking `cargo` to learn about project structure and get compiler errors for the "check on save" feature.

257 They use `crates/path` heavily instead of `std::path`.

258 A single `rust-analyzer` process can serve many projects, so it is important that server's current directory does not leak.

260 ### `crates/mbe`, `crates/tt`, `crates/proc_macro_api`, `crates/proc_macro_srv`

262 These crates implement macros as token tree -> token tree transforms.

263 They are independent from the rest of the code.

265 `tt` crate defined `TokenTree`, a single token or a delimited sequence of token trees.

266 `mbe` crate contains tools for transforming between syntax trees and token tree.

267 And it also handles the actual parsing and expansion of declarative macro (a-la "Macros By Example" or mbe).

269 For proc macros, the client-server model are used.

270 We pass an argument `--proc-macro` to `rust-analyzer` binary to start a separate process (`proc_macro_srv`).

271 And the client (`proc_macro_api`) provides an interface to talk to that server separately.

273 And then token trees are passed from client, and the server will load the corresponding dynamic library (which built by `cargo`).

274 And due to the fact the api for getting result from proc macro are always unstable in `rustc`,

275 we maintain our own copy (and paste) of that part of code to allow us to build the whole thing in stable rust.

277 **Architecture Invariant:**

278 Bad proc macros may panic or segfault accidentally. So we run it in another process and recover it from fatal error.

279 And they may be non-deterministic which conflict how `salsa` works, so special attention is required.

283 This crate is responsible for parsing, evaluation and general definition of `cfg` attributes.

285 ### `crates/vfs`, `crates/vfs-notify`

287 These crates implement a virtual file system.

288 They provide consistent snapshots of the underlying file system and insulate messy OS paths.

290 **Architecture Invariant:** vfs doesn't assume a single unified file system.

291 i.e., a single rust-analyzer process can act as a remote server for two different machines, where the same `/tmp/foo.rs` path points to different files.

292 For this reason, all path APIs generally take some existing path as a "file system witness".

296 This crate contains various non-rust-analyzer specific utils, which could have been in std, as well

297 as copies of unstable std items we would like to make use of already, like `std::str::split_once`.

301 This crate contains utilities for CPU and memory profiling.

304 ## Cross-Cutting Concerns

306 This sections talks about the things which are everywhere and nowhere in particular.

310 Some of the components of this repository are generated through automatic processes.

311 `cargo xtask codegen` runs all generation tasks.

312 Generated code is generally committed to the git repository.

313 There are tests to check that the generated code is fresh.

315 In particular, we generate:

317 * API for working with syntax trees (`syntax::ast`, the [`ungrammar`](https://github.com/rust-analyzer/ungrammar) crate).

318 * Various sections of the manual:

324 * Documentation tests for assists

326 **Architecture Invariant:** we avoid bootstrapping.

327 For codegen we need to parse Rust code.

328 Using rust-analyzer for that would work and would be fun, but it would also complicate the build process a lot.

329 For that reason, we use syn and manual string parsing.

333 Let's say that the IDE is in the process of computing syntax highlighting, when the user types `foo`.

335 `rust-analyzer`s answer is that the highlighting process should be cancelled -- its results are now stale, and it also blocks modification of the inputs.

337 The salsa database maintains a global revision counter.

338 When applying a change, salsa bumps this counter and waits until all other threads using salsa finish.

339 If a thread does salsa-based computation and notices that the counter is incremented, it panics with a special value (see `Canceled::throw`).

340 That is, rust-analyzer requires unwinding.

342 `ide` is the boundary where the panic is caught and transformed into a `Result<T, Cancelled>`.

346 Rust Analyzer has three interesting [system boundaries](https://www.tedinski.com/2018/04/10/making-tests-a-positive-influence-on-design.html) to concentrate tests on.

348 The outermost boundary is the `rust-analyzer` crate, which defines an LSP interface in terms of stdio.

349 We do integration testing of this component, by feeding it with a stream of LSP requests and checking responses.

350 These tests are known as "heavy", because they interact with Cargo and read real files from disk.

351 For this reason, we try to avoid writing too many tests on this boundary: in a statically typed language, it's hard to make an error in the protocol itself if messages are themselves typed.

352 Heavy tests are only run when `RUN_SLOW_TESTS` env var is set.

354 The middle, and most important, boundary is `ide`.

355 Unlike `rust-analyzer`, which exposes API, `ide` uses Rust API and is intended for use by various tools.

356 A typical test creates an `AnalysisHost`, calls some `Analysis` functions and compares the results against expectation.

358 The innermost and most elaborate boundary is `hir`.

359 It has a much richer vocabulary of types than `ide`, but the basic testing setup is the same: we create a database, run some queries, assert result.

361 For comparisons, we use the `expect` crate for snapshot testing.

363 To test various analysis corner cases and avoid forgetting about old tests, we use so-called marks.

364 See the `marks` module in the `test_utils` crate for more.

366 **Architecture Invariant:** rust-analyzer tests do not use libcore or libstd.

367 All required library code must be a part of the tests.

368 This ensures fast test execution.

370 **Architecture Invariant:** tests are data driven and do not test the API.

371 Tests which directly call various API functions are a liability, because they make refactoring the API significantly more complicated.

372 So most of the tests look like this:

375 fn check(input: &str, expect: expect_test::Expect) {

376 // The single place that actually exercises a particular API

382 check("foo", expect![["bar"]]);

387 check("spam", expect![["eggs"]]);

389 // ...and a hundred more tests that don't care about the specific API at all.

392 To specify input data, we use a single string literal in a special format, which can describe a set of rust files.

393 See the `Fixture` type.

395 **Architecture Invariant:** all code invariants are tested by `#[test]` tests.

396 There's no additional checks in CI, formatting and tidy tests are run with `cargo test`.

398 **Architecture Invariant:** tests do not depend on any kind of external resources, they are perfectly reproducible.

401 ### Performance Testing

403 TBA, take a look at the `metrics` xtask and `#[test] fn benchmark_xxx()` functions.

407 **Architecture Invariant:** core parts of rust-analyzer (`ide`/`hir`) don't interact with the outside world and thus can't fail.

408 Only parts touching LSP are allowed to do IO.

410 Internals of rust-analyzer need to deal with broken code, but this is not an error condition.

411 rust-analyzer is robust: various analysis compute `(T, Vec<Error>)` rather than `Result<T, Error>`.

413 rust-analyzer is a complex long-running process.

414 It will always have bugs and panics.

415 But a panic in an isolated feature should not bring down the whole process.

416 Each LSP-request is protected by a `catch_unwind`.

417 We use `always` and `never` macros instead of `assert` to gracefully recover from impossible conditions.

421 rust-analyzer is a long-running process, so its important to understand what's going on inside.

422 We have several instruments for that.

424 The event loop that runs rust-analyzer is very explicit.

425 Rather than spawning futures or scheduling callbacks (open), the event loop accepts an `enum` of possible events (closed).

426 It's easy to see all the things that trigger rust-analyzer processing, together with their performance

428 rust-analyzer includes a simple hierarchical profiler (`hprof`).

429 It is enabled with `RA_PROFILE='*>50` env var (log all (`*`) actions which take more than `50` ms) and produces output like:

432 85ms - handle_completion

433 68ms - import_on_the_fly

434 67ms - import_assets::search_for_relative_paths

435 0ms - crate_def_map:wait (804 calls)

436 0ms - find_path (16 calls)

437 2ms - find_similar_imports (1 calls)

438 0ms - generic_params_query (334 calls)

439 59ms - trait_solve_query (186 calls)

440 0ms - Semantics::analyze_impl (1 calls)

441 1ms - render_resolution (8 calls)

442 0ms - Semantics::analyze_impl (5 calls)

445 This is cheap enough to enable in production.

448 Similarly, we save live object counting (`RA_COUNT=1`).

449 It is not cheap enough to enable in prod, and this is a bug which should be fixed.